How To Build A RAG System Companies Actually Use: From Proof-of-Concept to Production

Build production-ready RAG systems that actually work. Learn why 70% fail and the strategies to prevent costly mistakes at scale.

Every week, another company announces its new AI initiative powered by RAG (Retrieval-Augmented Generation). Yet according to recent data, 70% of RAG implementations fail to deliver on their promises. They struggle with accuracy, scalability, or latency, or they produce inconsistent results unsuitable for production use.

The difference between a demo that impresses executives and a system that handles real customer queries at scale? Understanding what makes RAG systems break — and building to prevent those failures from day one.

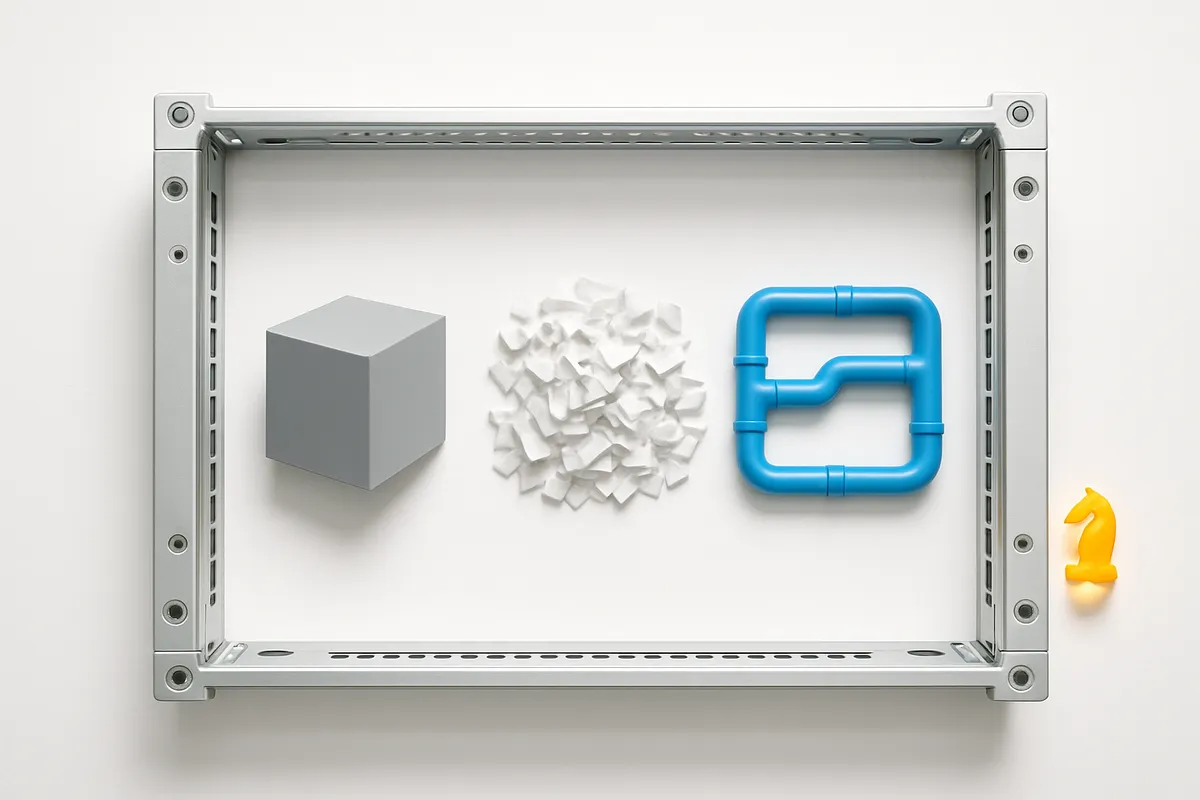

The RAG Reality Check

Put simply: RAG combines the intelligence of large language models with your company's specific knowledge. Instead of training a custom AI model (expensive and time-consuming), you give an existing model like GPT-4 or Claude access to your documents, databases, and internal knowledge.

Think of it as hiring a brilliant consultant who can instantly access and understand every document in your company. The promise is compelling — accurate, contextual answers based on your actual data.

Real numbers: Building a custom LLM can cost $2-10 million and take 6-12 months. A well-implemented RAG system can be production-ready in 4-8 weeks for $50-200k.

But here's where companies stumble. They see a demo where the system correctly answers "What's our refund policy?" and rush to production. Six months later, the system confidently tells customers incorrect information, retrieves irrelevant documents, or takes 30 seconds to respond.

Why RAG Systems Fail in Production

The Data Quality Trap

According to industry analysis, the primary reason RAG systems fail is treating data preparation as a one-time task. Teams dump documents into a vector database and call it done.

What actually happens: Your PDF processing misses tables. Your HTML scraper includes navigation menus in product descriptions. Your chunking algorithm splits critical context across boundaries.

As noted in production case studies, successful systems treat data preparation as a continuous, sophisticated pipeline. They implement semantic chunking that preserves context boundaries rather than arbitrary character limits. This means analyzing document structure and ensuring each chunk contains complete thoughts.

Here is what we recommend: Before processing 10,000 documents, manually review 100. Look for edge cases — tables, code blocks, footnotes. Build your pipeline to handle these correctly from the start.
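
To make the idea of structure-preserving chunking concrete, here is a minimal sketch, assuming Markdown-like input where code blocks are fenced and paragraphs are separated by blank lines. The function names (`split_blocks`, `chunk_blocks`) and the `max_chars` budget are illustrative assumptions, not part of any particular library. Tables, footnotes, and PDF layouts would need their own handling.

```python
FENCE = "`" * 3  # marker that opens and closes a fenced code block


def split_blocks(text: str) -> list[str]:
    """Split text into blocks: fenced code stays whole, prose splits on blank lines."""
    blocks, buf, in_code = [], [], False
    for line in text.splitlines():
        if line.strip().startswith(FENCE):
            if in_code:
                # Closing fence: finish the code block as one unit.
                buf.append(line)
                blocks.append("\n".join(buf))
                buf, in_code = [], False
            else:
                # Opening fence: flush any pending paragraph first.
                if buf:
                    blocks.append("\n".join(buf))
                buf, in_code = [line], True
        elif not in_code and not line.strip():
            # A blank line outside code ends the current paragraph.
            if buf:
                blocks.append("\n".join(buf))
                buf = []
        else:
            buf.append(line)
    if buf:
        blocks.append("\n".join(buf))
    return blocks


def chunk_blocks(text: str, max_chars: int = 800) -> list[str]:
    """Greedily pack whole blocks into chunks; a block is never split."""
    chunks, current = [], ""
    for block in split_blocks(text):
        candidate = f"{current}\n\n{block}" if current else block
        if current and len(candidate) > max_chars:
            chunks.append(current)
            current = block
        else:
            current = candidate
    if current:
        chunks.append(current)
    return chunks
```

A block longer than `max_chars` is emitted as its own oversized chunk rather than cut in half. That is a deliberate trade-off in this sketch: an intact code block or paragraph is usually more useful to the retriever than two fragments.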

The Latency Problem Nobody Talks About

RAG introduces additional latency because of the retrieval step. As your document corpus grows, retrieval slows down, especially if the underlying infrastructure cannot scale adequately.

Honest take: That sub-second response time in your demo? Add network latency, database lookup, embedding generation, and LLM processing. Suddenly you're at 5-10 seconds per query.

According to IBM's research, successful implementations use these strategies:

- Vector quantization to compress vector representations (fewer bits per value), which shrinks the index and speeds up similarity search

- Pre-filtering with keyword search before dense retrieval to reduce search space

- Semantic caching for frequently asked questions

- Multi-stage ranking with fast initial retrieval followed by precise reranking
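
Of the strategies above, semantic caching is the easiest to sketch. The example below is a simplified illustration, not a production design: `toy_embed` is a bag-of-words stand-in for a real embedding model, and the `SemanticCache` class, its linear scan, and the 0.8 similarity threshold are all assumptions chosen for readability.

```python
import math
from collections import Counter
from typing import Callable, Optional

Vector = dict[str, float]


def toy_embed(text: str) -> Vector:
    """Stand-in embedding (word counts). Swap in a real embedding model."""
    return dict(Counter(text.lower().replace("?", "").split()))


def cosine(a: Vector, b: Vector) -> float:
    """Cosine similarity between two sparse vectors."""
    dot = sum(value * b.get(key, 0.0) for key, value in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


class SemanticCache:
    """Return a stored answer when a new query is close enough to a cached one."""

    def __init__(self, embed: Callable[[str], Vector], threshold: float = 0.8):
        self.embed = embed
        self.threshold = threshold
        self.entries: list[tuple[Vector, str]] = []

    def get(self, query: str) -> Optional[str]:
        query_vec = self.embed(query)
        best_score, best_answer = 0.0, None
        for vec, answer in self.entries:  # linear scan; fine for a sketch
            score = cosine(query_vec, vec)
            if score > best_score:
                best_score, best_answer = score, answer
        return best_answer if best_score >= self.threshold else None

    def put(self, query: str, answer: str) -> None:
        self.entries.append((self.embed(query), answer))
```

On a cache hit, the retrieval and generation steps are skipped entirely. The threshold is the knob to watch: set it too low and users get answers to questions they did not ask.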

Key takeaway for business: Every second of latency costs conversions. Budget for proper infrastructure from the start, not as an afterthought.

The Retrieval Quality Crisis

Your RAG system is only as good as the documents it retrieves. According to Databricks' analysis, "My retriever is returning irrelevant documents" is one of the top five challenges teams face.

The root cause? Most teams use a single retrieval strategy for all queries. But different questions need different approaches:

- Technical specifications need exact keyword matches

- Conceptual questions benefit from semantic similarity

- Multi-step problems require retrieving related documents

As noted in production implementations, hybrid search that combines dense embeddings with sparse keyword scoring (like BM25) significantly improves retrieval quality. Tools like Qdrant and Weaviate support this out of the box.
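
One common way to merge a keyword ranking with a dense ranking is reciprocal rank fusion (RRF). The sketch below is a generic, library-independent illustration of that technique, not the API of Qdrant or Weaviate; the function name and the document IDs in the usage note are made up for the example.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Fuse several ranked lists of document IDs into one ranking.

    Each document scores sum(1 / (k + rank)) over the lists it appears in,
    with rank starting at 1. k=60 is a commonly used default.
    """
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by ID for determinism.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))
```

For instance, fusing a keyword ranking `["doc_a", "doc_b", "doc_c"]` with a dense ranking `["doc_b", "doc_d", "doc_a"]` puts `doc_b` first, because it ranks highly in both lists. RRF only needs ranks, so it sidesteps the problem that BM25 scores and cosine similarities are on different scales.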

What this means for your project: Don't commit to a vector database based on marketing. Test with your actual data and query patterns.

Building RAG That Actually Works

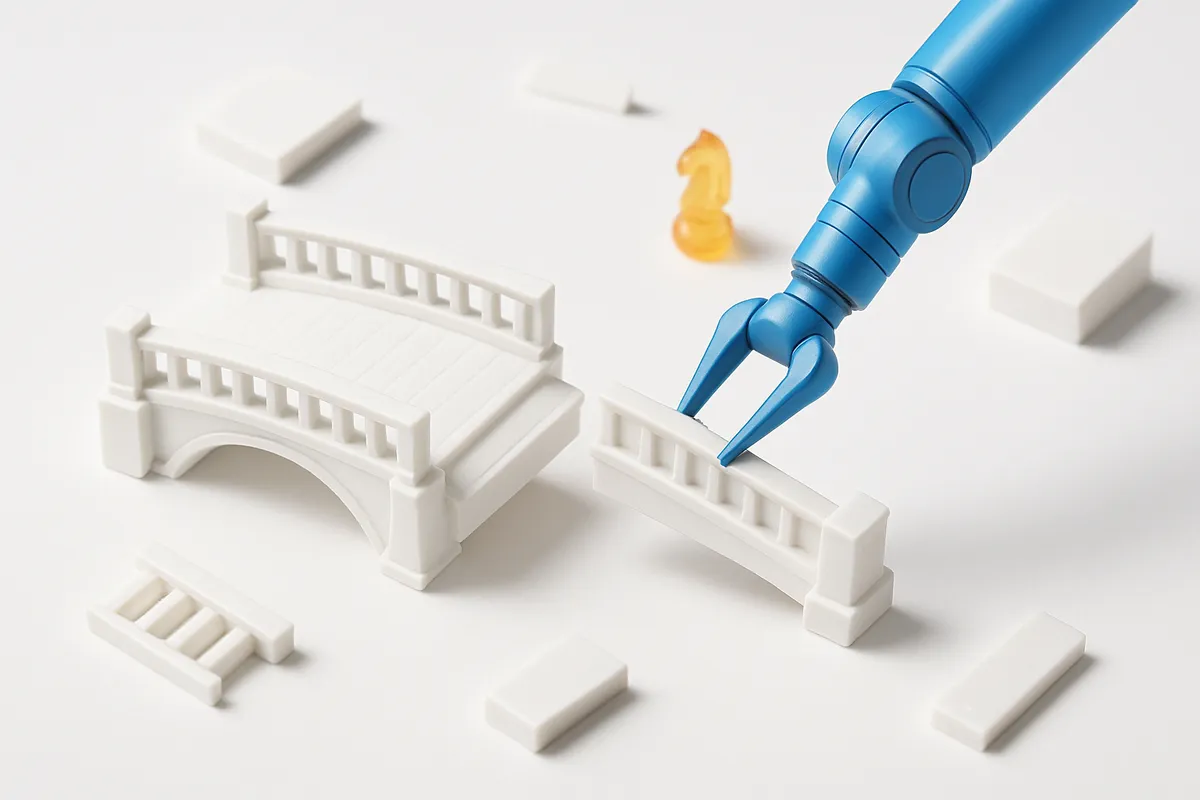

Start With the Right Architecture

Based on analysis of successful production systems, every enterprise RAG system needs four mandatory layers:

- Data Layer: Not just storage, but intelligent processing

- Model Layer: Embeddings, retrieval, and generation models

- Deployment Layer: Scalable infrastructure with proper monitoring

- Application Orchestration: Managing the complex pipeline

According to recent frameworks analysis, teams can choose between custom code (full control) or frameworks like LangChain and LlamaIndex (faster development). The choice depends on your team's expertise and customization needs.

In our experience with 50+ projects: Start with frameworks for prototyping, but be prepared to replace components with custom code as you scale. Frameworks help you learn what you need, but production often requires fine-grained control.

The Data Pipeline That Makes or Breaks You

Successful RAG implementations share common data practices:

Advanced Chunking Strategies: As noted in production guides, technical content requires keeping code blocks intact and using sensible separators with overlap. Financial documents might need table-aware processing. Legal texts require preserving citation context.

Continuous Quality Monitoring: According to case studies, production systems implement separate LLM calls to verify that generated responses are grounded in retrieved context. If validation fails, they regenerate or escalate to humans.

Dynamic Query Processing: One analysis published on Medium highlights that re-weighting query terms dynamically based on user context can substantially improve retrieval. In practice, this means your system learns which terms matter most for different user types.
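
The continuous quality monitoring practice described above (verify, then regenerate or escalate) can be sketched as a small control loop. This is a hedged illustration: `generate` and `is_grounded` are hypothetical callables standing in for your generation call and your separate verification call, and `max_attempts` is an assumed parameter.

```python
from typing import Callable


def answer_with_validation(
    question: str,
    context: str,
    generate: Callable[[str, str], str],
    is_grounded: Callable[[str, str], bool],
    max_attempts: int = 2,
) -> dict:
    """Generate an answer, check it against the context, retry, then escalate."""
    for attempt in range(1, max_attempts + 1):
        answer = generate(question, context)
        # Separate verification step: is the answer supported by the context?
        if is_grounded(answer, context):
            return {"status": "answered", "answer": answer, "attempts": attempt}
    # No attempt passed validation: hand off to a human instead of guessing.
    return {"status": "escalated", "answer": None, "attempts": max_attempts}
```

Keeping the two calls behind plain function parameters makes the loop easy to test with stubs and lets you swap the verifier (a second LLM call, an entailment model, or simple rules) without touching the control flow.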

Performance Optimization That Matters

Token optimization isn't just about cost — it's about latency and accuracy. Production systems compress retrieved context by:

- Removing redundancy across retrieved chunks

- Using extractive summarization for long passages

- Eliminating unnecessary formatting and metadata

According to performance studies, these optimizations can reduce token usage by 40-60% while maintaining or improving answer quality.
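
As an illustration of the first technique in the list, removing redundancy across retrieved chunks, here is a minimal sketch using token-set (Jaccard) overlap. The helper names and the 0.8 threshold are assumptions for the example; a production system might compare embeddings instead.

```python
def tokens(text: str) -> set[str]:
    """Lowercased whitespace tokens; crude but enough for near-duplicate checks."""
    return set(text.lower().split())


def jaccard(a: set[str], b: set[str]) -> float:
    """Share of tokens the two sets have in common."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def drop_redundant(chunks: list[str], threshold: float = 0.8) -> list[str]:
    """Keep chunks in rank order, skipping near-duplicates of an already kept chunk."""
    kept: list[str] = []
    kept_tokens: list[set[str]] = []
    for chunk in chunks:
        chunk_tokens = tokens(chunk)
        if all(jaccard(chunk_tokens, seen) < threshold for seen in kept_tokens):
            kept.append(chunk)
            kept_tokens.append(chunk_tokens)
    return kept
```

Because the input is assumed to be in retrieval-rank order, the highest-ranked copy of any duplicated passage is the one that survives.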

Real numbers: A financial services company reduced its per-query cost from $0.15 to $0.06 (a 60% reduction) while improving response time from 8 seconds to 3 seconds through systematic optimization.

Security and Compliance — The Hidden Complexity

As Databricks notes, RAG apps can retrieve "sensitive data that users should not have access to." This isn't a bug — it's an architectural challenge.

Production systems implement:

- Document-level access controls synchronized with source systems

- Query logging for compliance without storing sensitive data

- Result filtering based on user permissions

- Audit trails for data lineage
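
Result filtering based on user permissions can be sketched as a post-retrieval step. The `Chunk` type and its `allowed_groups` field are hypothetical; the assumption here is that group permissions were copied from the source system at ingestion time and are kept in sync.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Chunk:
    doc_id: str
    text: str
    # Groups allowed to read the source document (assumed synced at ingestion).
    allowed_groups: frozenset[str] = field(default_factory=frozenset)


def filter_by_permission(chunks: list[Chunk], user_groups: set[str]) -> list[Chunk]:
    """Keep only chunks the user may read; chunks without groups are denied."""
    return [chunk for chunk in chunks if chunk.allowed_groups & user_groups]
```

The filter is deny-by-default: a chunk with no recorded groups is never returned. Where the vector database supports metadata filters, applying the same rule inside the query is usually preferable, since restricted text then never leaves the store.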

Honest take: Security isn't an add-on feature. If you're handling sensitive data, build access controls into your initial architecture or prepare to rebuild later.

Implementation Strategies That Work

Choose Your Tools Wisely

Based on tool ecosystem analysis:

Vector Databases: Pinecone for managed solutions, Chroma for experimentation, Weaviate or Qdrant for hybrid search needs. Your choice depends on scale, budget, and control requirements.

Embedding Models: OpenAI's text-embedding-ada-002 (or its newer text-embedding-3 successors) for general use, Sentence Transformers for specialized domains. Test with your actual content: domain-specific models often outperform general ones.

LLM Selection: GPT-4 and Claude for complex reasoning, smaller models for simple extraction. The key is matching model capabilities to task requirements.

The Testing Strategy Everyone Skips

According to production case studies, successful teams implement:

- Golden Dataset Testing: 100-500 manually verified question-answer pairs

- Regression Testing: Ensuring improvements don't break existing functionality

- Load Testing: Simulating concurrent users to identify bottlenecks

- Adversarial Testing: Attempting to make the system provide incorrect information
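
Golden dataset and regression testing from the list above can share one small harness. The sketch below assumes a golden set of (question, expected answer) pairs and an `answer_fn` that wraps your RAG pipeline; both names are illustrative. Exact-match scoring is used only to keep the example short, and real answers usually need a softer comparison.

```python
from typing import Callable


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace before comparing answers."""
    return " ".join(text.lower().split())


def exact_match(expected: str, actual: str) -> bool:
    return normalize(expected) == normalize(actual)


def run_golden_set(
    golden: list[tuple[str, str]],
    answer_fn: Callable[[str], str],
    is_correct: Callable[[str, str], bool] = exact_match,
) -> dict:
    """Score the pipeline against verified question-answer pairs."""
    failures = [q for q, expected in golden if not is_correct(expected, answer_fn(q))]
    total = len(golden)
    accuracy = (total - len(failures)) / total if total else 0.0
    return {"accuracy": accuracy, "failures": failures}


def regressions(previous_failures: list[str], current_failures: list[str]) -> list[str]:
    """Questions that passed in the previous run but fail now."""
    previous = set(previous_failures)
    return [q for q in current_failures if q not in previous]
```

Storing the `failures` list from each run is what turns a one-off evaluation into a regression test: any question that appears in `regressions(...)` was broken by the latest change.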

Key takeaway for business: Testing isn't optional. Budget 30-40% of development time for comprehensive testing, or plan to spend 300% of it fixing production issues.

Making RAG Work for Your Business

Start Small, Scale Smart

The teams that succeed with RAG follow a predictable pattern:

- Pilot Project: 100-500 documents, single use case, limited users

- Measured Expansion: Add documents and use cases based on success metrics

- Production Scaling: Full implementation with proper infrastructure

This approach lets you learn your data's quirks, understand user behavior, and build team expertise without betting the company.

Budget for Reality, Not Demos

Real numbers from production implementations:

- Initial development: $50-200k

- Infrastructure (first year): $20-100k depending on scale

- Maintenance and updates: 20-30% of initial cost annually

- Data preparation often takes 40-60% of total development time
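
For a rough sense of what the ranges above add up to, here is a back-of-the-envelope calculation. It assumes that first-year cost is simply development plus infrastructure, and that the low ends and the high ends of each range go together; real projects will mix them.

```python
def first_year_cost(initial_dev: int, infrastructure: int) -> int:
    """First-year spend in dollars: initial development plus infrastructure."""
    return initial_dev + infrastructure


def annual_maintenance(initial_dev: int, rate_pct: int) -> int:
    """Yearly maintenance in dollars, as a whole-number percentage of initial cost."""
    return initial_dev * rate_pct // 100


low_first_year = first_year_cost(50_000, 20_000)     # low end of both ranges
high_first_year = first_year_cost(200_000, 100_000)  # high end of both ranges
low_maintenance = annual_maintenance(50_000, 20)
high_maintenance = annual_maintenance(200_000, 30)
```

Under these assumptions the first year lands between $70k and $300k, with $10k to $60k per year in maintenance afterwards, before any ongoing infrastructure costs.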

Success Metrics That Matter

According to production implementations, track:

- Retrieval Precision: Are the right documents being found?

- Answer Accuracy: Verified against your golden dataset

- Response Time: 95th percentile, not average

- User Trust: Through feedback and usage patterns

- Cost per Query: Including all infrastructure and API costs

What this means for your project: Define success metrics before building. "It should answer questions correctly" isn't a metric; "95% accuracy on our golden dataset with a 95th-percentile response time under 3 seconds" is.
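
A target like this is easy to check automatically. The sketch below uses the nearest-rank method for the 95th percentile; the function names and default thresholds are assumptions that mirror the example target, not a standard.

```python
import math


def percentile(values: list[float], pct: float) -> float:
    """Nearest-rank percentile: smallest sample with at least pct% of samples at or below it."""
    if not values:
        raise ValueError("no samples")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def meets_targets(
    latencies_s: list[float],
    accuracy: float,
    min_accuracy: float = 0.95,
    max_p95_s: float = 3.0,
) -> bool:
    """True when golden-set accuracy and p95 latency both hit their targets."""
    return accuracy >= min_accuracy and percentile(latencies_s, 95) < max_p95_s
```

This is also why the 95th percentile matters more than the average: with 18 queries at 1 second and 2 queries at 9 seconds, the mean is 1.8 seconds, but the 95th percentile is 9 seconds, so one user in ten is still having a bad experience.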

The Path Forward

Building a RAG system companies actually use requires understanding that the demo is just the beginning. Success comes from:

- Treating data quality as an ongoing process, not a one-time task

- Building for latency and scale from day one

- Implementing proper testing and monitoring before production

- Choosing tools based on your actual needs, not marketing promises

Here is what we recommend: Start with a focused pilot project. Measure everything. Be prepared to iterate on your data pipeline more than your model selection. Build security and compliance into your architecture, not as an afterthought.

The 30% of RAG implementations that succeed share one trait: they respect the complexity while maintaining focus on business value. Your knowledge base might contain millions of documents, but if the system can't answer your customers' top 10 questions accurately and quickly, you haven't built something companies actually use.

Put simply: RAG isn't about the technology. It's about reliably connecting your users with the information they need. Everything else is an implementation detail.

Frequently Asked Questions

How do you evaluate whether a RAG system is actually working for your real use case?

Create a golden dataset of 100-500 real questions from your domain with verified answers. Test your system against this dataset weekly, tracking precision, recall, and response accuracy. Also monitor user feedback and query logs to identify patterns where the system fails.

What's the best way to chunk and store documents for enterprise-scale RAG systems with 20K+ documents?

Use semantic chunking that respects document structure — keeping tables, code blocks, and related paragraphs together. Implement overlap between chunks (10-20%) to preserve context. Store both the chunks and document metadata in your vector database, enabling filtered searches by document type, date, or department.
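
The overlap and metadata parts of this answer can be sketched in a few lines. Note the simplification: this example uses fixed word windows rather than true semantic chunking, and the record layout (`id`, `text`, plus metadata keys) is a hypothetical shape, not a specific vector database schema.

```python
def overlapping_chunks(text: str, size: int = 200, overlap: float = 0.15) -> list[str]:
    """Split text into windows of `size` words that share `overlap` of their words."""
    words = text.split()
    step = max(1, size - round(size * overlap))
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # the last window already reaches the end of the text
    return chunks


def build_records(doc_id: str, text: str, metadata: dict, **chunk_args) -> list[dict]:
    """Attach document metadata to every chunk so searches can be filtered."""
    return [
        {"id": f"{doc_id}-{i}", "text": chunk, **metadata}
        for i, chunk in enumerate(overlapping_chunks(text, **chunk_args))
    ]


def filter_records(records: list[dict], **criteria) -> list[dict]:
    """Keep records whose metadata matches every given key-value pair."""
    return [r for r in records if all(r.get(k) == v for k, v in criteria.items())]
```

Calling `filter_records(records, department="support")` before similarity search narrows the candidate set, which helps both relevance and latency once the corpus grows past tens of thousands of documents.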

How do you prevent a RAG system from giving confident but incorrect answers that destroy user trust?

Implement answer validation through a separate LLM call that verifies responses against retrieved sources. Include confidence scores in your pipeline and set thresholds for human escalation. Write your generation prompts so the model expresses uncertainty when the retrieved context doesn't fully support an answer.