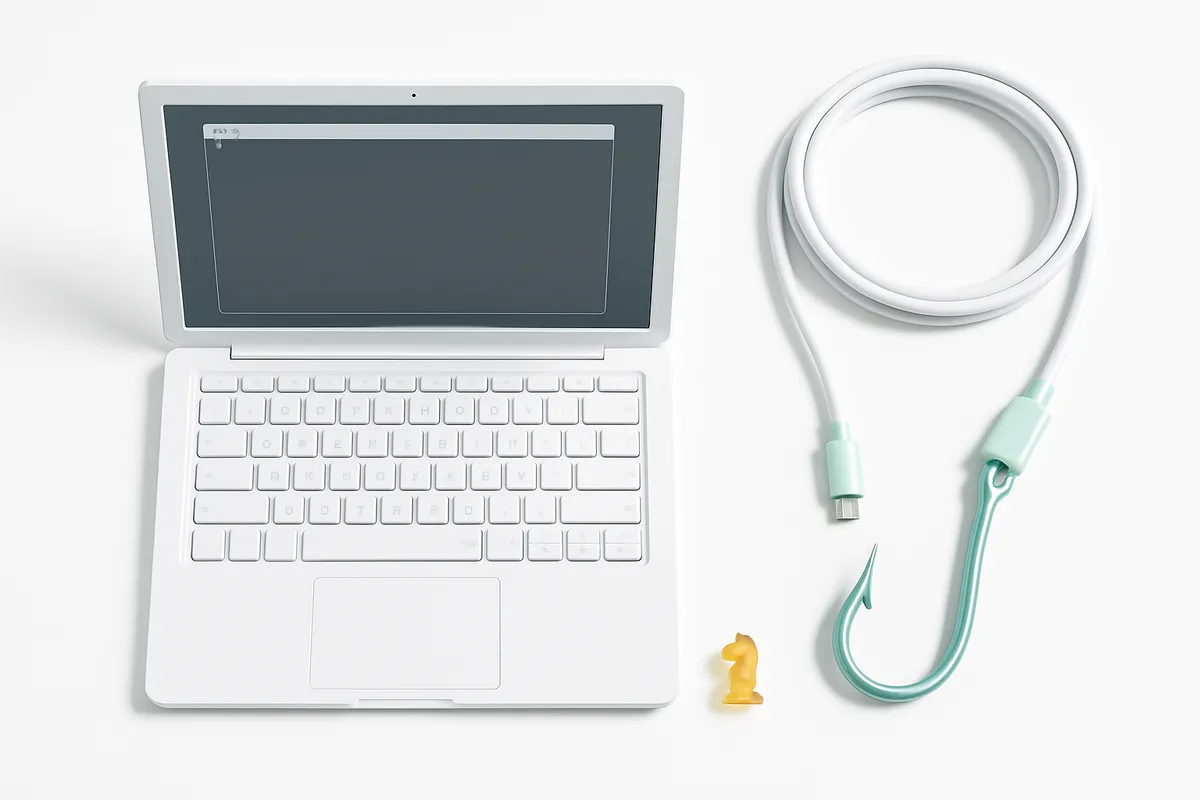

MCP Server Security: What 1,808 Audited Servers Reveal About AI Tool Integration Risks

Discover critical security risks in AI tool integration: 66% of 1,808 MCP servers audited have flaws. Learn what threats lurk in your AI agent connections.

The Problem: AI Agents Are Only as Secure as Their Weakest Tool Connection

Every team rushing to connect AI agents to databases, APIs, and file systems through Model Context Protocol (MCP) servers faces a hidden risk. MCP servers act as middleware between large language models and external tools — and when that middleware is misconfigured or malicious, the entire chain is exposed. Research by the Backslash Security team across thousands of publicly available MCP servers found hundreds with critical security flaws, including arbitrary command execution and network-wide exposure. A broader industry scan of 1,808 MCP servers revealed that 66% had security findings, with 427 classified as critical — spanning tool poisoning, toxic data flows, and unchecked code execution.

Put simply: two out of three MCP servers in the wild have at least one security problem, and nearly a quarter carry vulnerabilities that could hand over system-level access to an attacker.

Why This Matters for Any Business Using AI Agents

MCP is becoming the standard way AI agents interact with the real world — reading files, querying databases, calling APIs, triggering workflows. As Contrast Security explains, upon startup an MCP client queries configured servers to retrieve available tools and descriptions, then the AI uses those tools however it sees fit. That flexibility is the entire point — and also the entire risk.

A compromised MCP server doesn't just leak data. It can instruct an AI agent to execute arbitrary commands, exfiltrate credentials, or manipulate outputs that downstream systems trust implicitly. The blast radius is fundamentally different from a traditional API vulnerability because the AI agent acts autonomously, often without explicit human approval for each operation.

Real numbers: 427 critical findings across 1,808 servers means roughly 1 in 4 servers carries a vulnerability severe enough to allow system takeover, data theft, or supply chain compromise.

The Three Most Dangerous Vulnerability Classes

Tool Poisoning: Trojan Horses Inside Your AI Workflow

Tool poisoning is one of the most insidious MCP attacks. According to Practical DevSecOps, malicious commands are embedded in tool definitions or metadata, tricking the LLM into executing harmful actions. The tool looks legitimate on the surface — its name, description, and parameters all appear normal — but hidden instructions in the metadata redirect the AI agent's behavior.

Invariant Labs' MCP-Scan was built specifically to detect this pattern, where innocuous tools smuggle in malicious code like a trojan horse. The gap between what a tool claims to do and what it actually does is where the real danger lives.

As Enkrypt AI notes, traditional code scanners catch basic issues like SQL injection or XSS but miss the vulnerabilities that actually matter in MCP servers — because they don't understand AI agent behavior patterns, prompt injection vectors, or the unique trust boundaries that emerge when LLMs orchestrate system access.

Toxic Data Flows: When Untrusted Input Moves Through Trusted Channels

MCP servers sit between untrusted external sources and privileged internal systems. When input validation is weak or absent, attacker-controlled data flows directly into databases, command shells, or downstream APIs.

Prompt.security's analysis of the top 10 MCP security risks highlights that if MCP servers pass unvalidated user or external inputs to underlying databases or system commands, attackers exploit these vulnerabilities to execute malicious code, gain unauthorized access to infrastructure, and manipulate or delete critical data. This includes classic SQL injection and command injection — but amplified by the fact that the AI agent itself is constructing the queries based on potentially poisoned context.

CyCognito's research adds another dimension: MCP servers must never accept access tokens from clients and pass them directly to downstream APIs without validation. This breaks core security boundaries and prevents the server from applying rate limiting, auditing, and claim verification. Token passthrough essentially turns the MCP server into an unmonitored proxy for impersonation.

Arbitrary Code Execution: The Keys to the Kingdom

The Backslash research team found that excessive permissions and OS injection were the second most common issue across audited MCP servers, with dozens of instances where servers allow arbitrary command execution on the host machine — through careless use of subprocess calls, lack of input sanitization, or path traversal bugs.

Honest take: a function that takes a string and executes it as a shell command on the system is not a tool — it's an open door. When an AI agent can be tricked into calling that function with attacker-controlled input, the server's host machine is fully compromised.

Network Exposure: The "NeighborJack" Problem

The single most common vulnerability Backslash found across hundreds of cases was MCP servers explicitly bound to all network interfaces (0.0.0.0), making them accessible to anyone on the same local network. Their research team calls this the "NeighborJack" vulnerability.

The analogy is straightforward: imagine working in a coworking space with your MCP server silently running on your machine. Anyone on the same Wi-Fi can access it, impersonate tools, and potentially run operations on your behalf. It's like leaving your laptop open and unlocked for everyone in the room.

Combined with excessive permissions, this creates a perfect storm — network-accessible servers that also allow arbitrary command execution mean a remote attacker can go from network access to full system control in a single step.

What Makes MCP Security Different From Traditional API Security

Traditional API security focuses on authentication, rate limiting, and input validation at well-defined endpoints. MCP security requires all of that — plus an entirely new layer of concerns:

| Traditional API Risk | MCP-Specific Risk |

|---|---|

| SQL injection via user input | Prompt injection via LLM-constructed queries |

| Unauthorized endpoint access | Tool poisoning via metadata manipulation |

| Token theft from API responses | Credential exposure through MCP config files |

| DDoS on API endpoints | Rug pull attacks — tool behavior changes after initial approval |

| Supply chain via dependencies | Supply chain via MCP server registries |

As Datadog's analysis points out, tool poisoning attacks can force a client to read a host's sensitive files, such as MCP server configuration files (~/.cursor/mcp.json) and SSH keys. The configuration file itself becomes an attack target because it typically contains the credentials for connecting to databases and services.

Practical Mitigation: Here Is What We Recommend

Based on the research findings across 1,808 servers and guidance from OWASP, CyCognito, and Prompt.security, these are the highest-impact steps:

1. Sandbox Every MCP Server

OWASP recommends sandboxing MCP servers as a baseline practice. This limits the blast radius when — not if — a vulnerability is exploited. A sandboxed server that allows arbitrary command execution is still dangerous, but the damage is contained to the sandbox rather than the entire host.

2. Bind to Localhost, Not 0.0.0.0

Hundreds of servers in the research were exposed to the entire local network unnecessarily. Binding MCP servers to 127.0.0.1 instead of 0.0.0.0 eliminates the NeighborJack attack class entirely — a one-line fix with massive security impact.

3. Validate Inputs at Every Boundary

Parameterized queries for database access and strict input sanitization for shell commands are non-negotiable. This applies to both direct user inputs and LLM-generated inputs — the AI agent's output should be treated as untrusted when it reaches the MCP server.

4. Eliminate Token Passthrough

MCP servers should never forward client-provided tokens directly to downstream APIs. Tokens and API keys must be explicitly issued to the MCP server and used only by that server. This preserves rate limiting, auditing, and the ability to detect abuse.

5. Scan With MCP-Aware Tools

General-purpose static analysis misses MCP-specific risks. Tools like Invariant Labs MCP-Scan, Enkrypt AI's MCP Scanner, and CyberMCP are built to detect tool poisoning, prompt injection vectors, and MCP-specific misconfigurations that traditional scanners overlook.

6. Sign and Verify MCP Components

CyCognito emphasizes that developers must sign MCP components to allow users to verify integrity. Build systems should include static analysis (SAST) and software composition analysis (SCA) to catch vulnerabilities in both code and dependencies before deployment.

7. Enforce Authentication — Always

According to Prompt.security, weak or misconfigured authentication mechanisms across MCP environments enable attackers to bypass security controls, impersonate legitimate users, and gain unauthorized access. Multi-factor authentication and regular security audits are essential, not optional.

Choosing an MCP Security Platform

The tooling landscape for MCP security is maturing quickly. Here's an honest comparison of the main categories:

- Comprehensive platforms like MCP Manager provide centralized gateway control, observability, and granular admin controls for enterprise environments. Best suited for organizations running multiple MCP servers at scale.

- Specialized scanners like Enkrypt AI and Invariant Labs MCP-Scan focus on detecting MCP-specific threats — tool poisoning, rug pulls, prompt injection. Better for teams that need targeted vulnerability detection in CI/CD pipelines.

- Lightweight gateways like MCP Total offer foundational logging and guardrails against data exfiltration. Good for smaller teams starting their MCP security journey.

- Security checklists like Slowmist MCP Security Checklist provide manual audit frameworks based on real-world security audit findings. Useful as a baseline assessment before investing in automated tooling.

Key takeaway for business: start with a scan of every MCP server currently in use. The research shows that 66% have findings — so assume yours does too until proven otherwise. Automated scanning integrated into CI/CD catches problems before they reach production.

The Cost of Ignoring MCP Security

Every MCP server connected to an AI agent is a trust boundary. When that boundary is breached, the AI agent becomes the attacker's hands — reading files, executing commands, and accessing systems with whatever permissions the server grants. Unlike a traditional breach where an attacker must navigate the environment manually, a compromised MCP server gives the attacker an autonomous agent that already knows how to use every connected tool.

With 427 critical vulnerabilities found across 1,808 servers — including tool poisoning that can redirect AI behavior invisibly and network exposure that puts servers within reach of anyone on the same Wi-Fi — the risk is concrete and immediate.

What this means for your project: if your team is building or deploying AI agents with MCP integrations, security auditing is not a future concern. It is a prerequisite. The numbers make the case clearly — treat MCP server security with the same rigor as production database security, because the access level is comparable.

Frequently Asked Questions

How can I detect tool poisoning attacks in my MCP servers before they cause damage?

Use MCP-specific scanning tools like Invariant Labs MCP-Scan or Enkrypt AI's scanner, which analyze the gap between tool descriptions and actual implementation. Traditional static analysis tools miss these vectors because they don't understand AI agent behavior patterns. Integrate scanning into your CI/CD pipeline so every commit is checked automatically.

What's the difference between tool poisoning and tool shadowing attacks in MCP?

Tool poisoning embeds malicious instructions in a tool's metadata or hidden description fields, tricking the AI into performing unintended actions while the tool appears legitimate. Tool shadowing (also called rug pull attacks) involves a tool that behaves correctly during initial review but changes behavior after approval — the tool's functionality shifts post-deployment. Both exploit the trust the AI agent places in tool definitions, but shadowing is harder to catch because the tool is genuinely safe at scan time.

Why do MCP servers with network exposure and excessive permissions create a "perfect storm" for attackers?

Network exposure (binding to 0.0.0.0) means anyone on the local network can reach the server. Excessive permissions mean the server can execute arbitrary commands on the host. Combined, an attacker goes from Wi-Fi access to full system control without needing any credentials — the server itself provides both the entry point and the execution capability.

How do I audit MCP tool permissions to ensure they follow the principle of least privilege?

Review each tool's access scope: what files it can read, what commands it can execute, what APIs it can call, and what tokens it holds. Remove any permissions not strictly required for the tool's stated function. CyCognito recommends prohibiting token passthrough entirely and ensuring each MCP server has only its own explicitly issued credentials, not forwarded client tokens.

Can malicious actors exploit MCP servers differently than direct LLM attacks, and what expanded attack surface does this create?

MCP servers expand the attack surface beyond the LLM itself by providing direct access to file systems, databases, APIs, and system commands. An attacker who compromises an MCP server doesn't need to manipulate the LLM's reasoning — they can inject malicious tool definitions, intercept data flows, or execute commands directly. As Practical DevSecOps notes, LLM vulnerabilities like adversarial prompt manipulation combine with MCP server threats to amplify the overall risk, making the ecosystem more susceptible to sophisticated exploits than either component alone.

This article is based on publicly available sources and may contain inaccuracies.